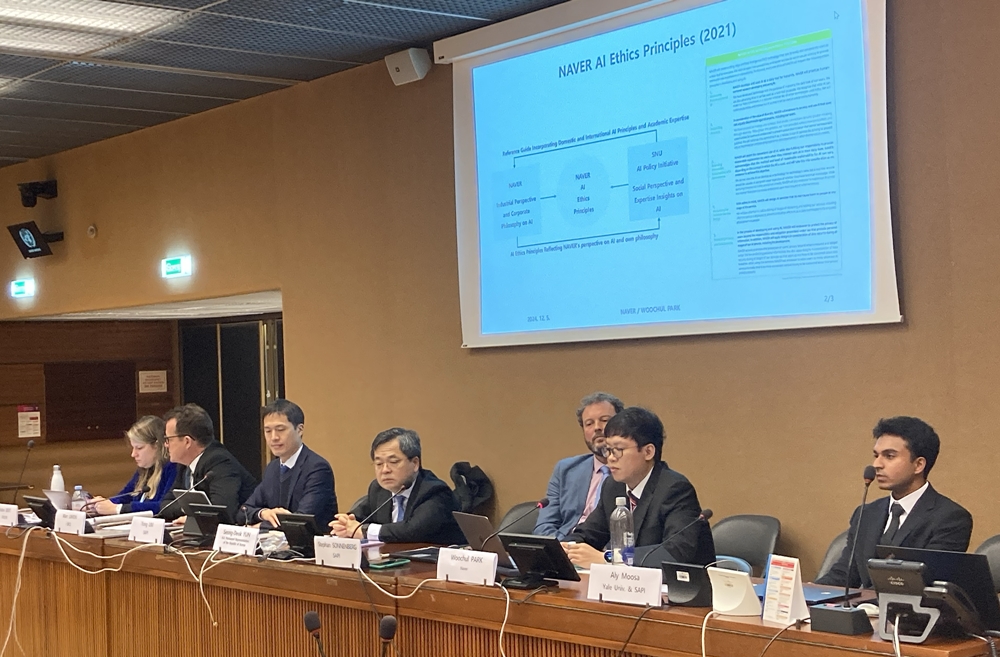

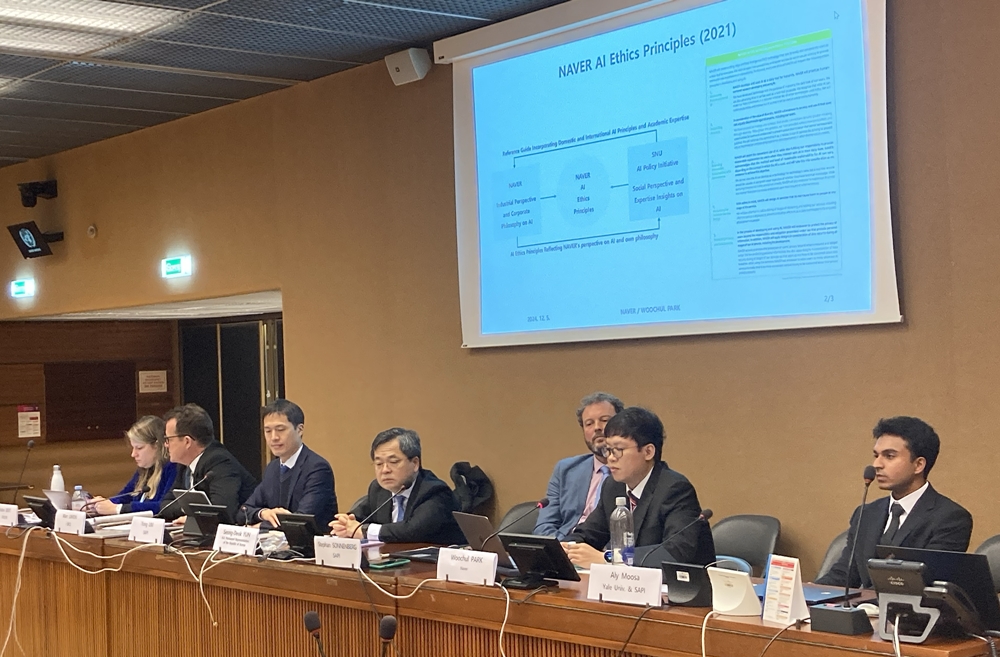

Woochul Park, Naver’s Policy/RM Agenda Counsel (second from the right), presents Naver’s AI safety policy practices. | Photo by Naver

Naver Corp. (CEO Soo Yeon Choi) recently participated in the UN event “Towards a Human Rights-Based Approach to New and Emerging Technologies: From Concept to Implementation” at the UN Geneva Office in Switzerland. The company shared its efforts to create a safer AI ecosystem by translating ethical AI principles into actionable industry policies.

The Permanent Mission of the Republic of Korea to the United Nations Office at Geneva, the Seoul National University AI Policy Initiative (SAPI), and the Universal Rights Group (URG) co-hosted the event. Since 2022, SAPI has conducted in-depth research on human rights-based approaches to new technologies and has published annual reports on the topic. This year's event focused on applying human rights norms in practice and featured presentations from key stakeholders, including Ambassador Seong Deok Yun, Professors Yong Lim and Stephen Sonnenberg, and UN human rights officials.

During the event, Naver showcased how it applies AI safety principles in practice. Woochul Park, Naver's Policy/RM Agenda Counsel, introduced CHEC (Consultation on Human-centered AI's Ethical Considerations), the company's AI ethics advisory process launched in 2022. CHEC is designed as a collaborative process: rather than relying on unilateral checks after the fact, it integrates social perspectives into service planning and development.

Park emphasized that AI ethics becomes meaningful only when it is grounded in real-world contexts. He explained that Naver works closely with academic partners such as SAPI to make its AI ethics principles practical. Through the CHEC process, Naver tailors its policies to the perspectives of service developers, enabling meaningful collaboration during product design and implementation.

The company also highlighted other policies that operationalize its AI ethics principles. In 2023, it published the “ClovaX Guide for Human-Centered Use,” which applies AI ethics principles to rapidly advancing generative AI technologies. This year, Naver launched the AI Safety Framework (ASF) to systematically identify, assess, and manage potential AI risks throughout its development and deployment processes.

Yong Lim, Director of SAPI and Professor at Seoul National University, underscored the event's significance, noting that sharing practical approaches to integrating human rights-based policies into tech operations is essential. He pledged to strengthen collaboration with policymakers and tech companies to expand rights-based AI governance.

Naver continues to build its global leadership in AI safety through international partnerships. The company contributed technical expertise to the UN's AI safety report and supported the AI safety benchmarking project at MLCommons, a global AI consortium. In July, Naver became the first Korean member of C2PA (Coalition for Content Provenance and Authenticity), contributing to standards for AI watermarking and content provenance.

Jung-Woo Ha, Head of Naver’s Future AI Research Center, stated that Naver’s recognition as a global AI safety leader stems from embedding core AI technologies into its operations while maintaining close communication with service teams. He reaffirmed Naver’s commitment to securing advanced AI capabilities and fostering a safer, more sustainable global AI ecosystem.